Springboard wanted to introduce call recordings and AI-generated summaries so students and mentors could get more value out of mentor calls. But recording conversations raised concerns around privacy, trust, and legal requirements, and consent wasn’t something we could treat lightly.

From early research, we learned that students were generally excited about being able to revisit calls to learn and reflect. At the same time, both students and mentors had questions about who could access recordings, how long they’d be stored, and whether being recorded would change how conversations felt.

The challenge was figuring out how to introduce something new in a way that felt clear, respectful, and trustworthy from the start.

To move forward, I worked closely with Product, Legal, and Program teams to transform complex compliance requirements into an experience that felt approachable and fair.

I led student interviews and research synthesis, and partnered with my PM on mentor interviews.

Before jumping into UI, I started with decision flows to work through key questions: When should we ask for consent? How firm should the experience be? How do we handle users mid-program?

From there, I iterated through flows and edge cases, tested assumptions, and refined the experience with feedback until we had enough clarity to ship.

I partnered closely with Engineering during implementation, conducting design QA and UAT to ensure consent logic, timing, and edge cases worked as expected. We launched the consent experience for mentor call recordings in early January 2025.

The consent experience spanned the product, including onboarding prompts, in-product messaging, and consent-aware states that guided user decisions.

The experience exceeded student adoption goals, with 82% of students consenting against a 70% target. Mentor consent landed at 56%, below the goal but consistent with what we heard in interviews, which signaled that more mentor-facing value was needed.

Most importantly, this work created a trusted, compliant foundation that enabled call recordings and AI summaries to move forward. Consent shifted from a blocker to an enabler for everything that came next.

The takeaway: Clear, well-timed consent messaging introduced recordings without disrupting the tone of mentorship conversations.

We used our research to drive decisions in real time. I led student interviews and partnered with my PM on mentor interviews, then synthesized what we learned into early design directions.

Key insights:

These insights set a high bar for clarity and transparency, reinforced the need for user control, and pushed us toward a more predictable, non-disruptive experience.

Early research insights that directly shaped consent flows, tone, and enforcement decisions.

Before getting into UI decisions, I focused on defining how the system should behave.

Consent impacted scheduling, recording behavior, access, and what users could do next, so I started by mapping flows and decision paths across different scenarios.

A few things shaped this work:

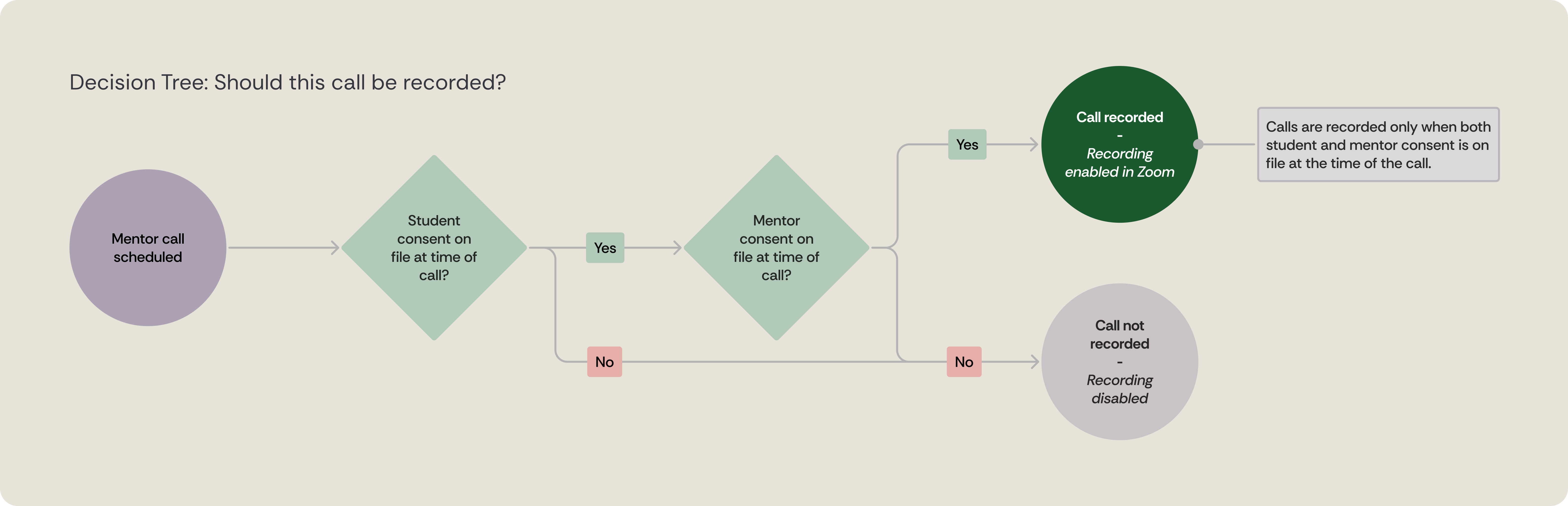

Decision logic for when mentor calls should be recorded.

Calls are only recorded when both student and mentor consent are on file at the time of the call.
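To make that rule concrete, here is a minimal sketch of the check in Python. It is an illustration only, not the shipped implementation: the `ConsentRecord` shape, the `granted_at` field, and the `should_record` function are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ConsentRecord:
    # Hypothetical shape: the time consent was granted, or None if none is on file.
    granted_at: Optional[datetime] = None


def has_consent_on_file(record: ConsentRecord, call_start: datetime) -> bool:
    """True only if consent was granted at or before the call start."""
    return record.granted_at is not None and record.granted_at <= call_start


def should_record(student: ConsentRecord, mentor: ConsentRecord, call_start: datetime) -> bool:
    """Record only when BOTH participants had consent on file at call time."""
    return has_consent_on_file(student, call_start) and has_consent_on_file(mentor, call_start)
```

Checking against the call start time, rather than the current time, means consent given after a call has started does not apply to that call.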

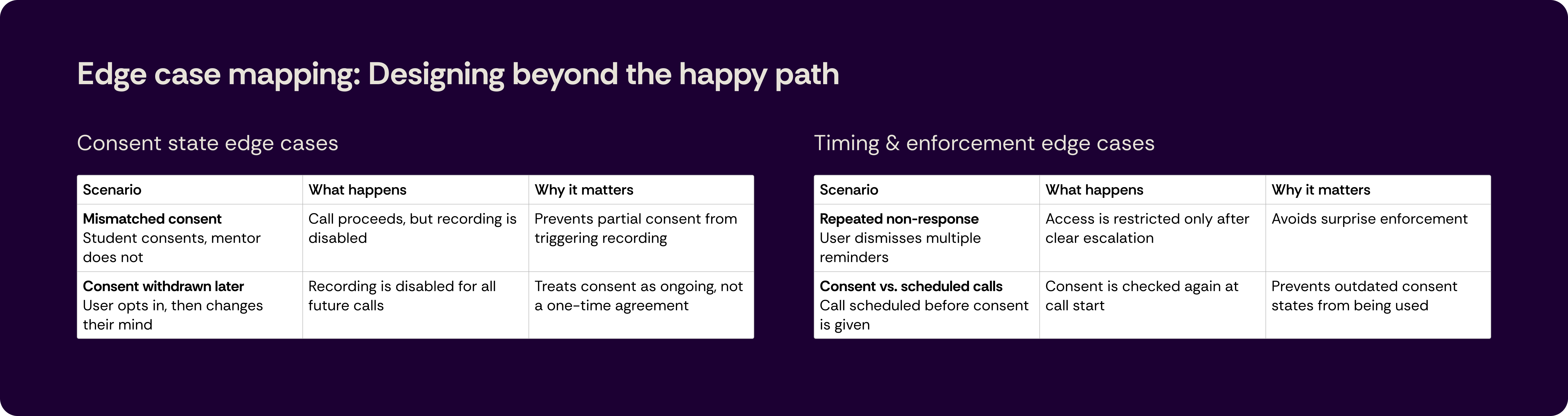

Designing beyond the happy path.

These edge cases helped ensure consent was handled safely, predictably, and without breaking trust.

Copy played a major role in this project. Because consent needed to be explicit and trustworthy, language directly shaped how the experience felt.

I started with placeholder text to define structure and intent, then refined it through collaboration with Program Success, Mentor, and Legal.

As the experience evolved, the copy went through multiple iterations. Even small wording changes impacted clarity, sense of control, and how comfortable users felt opting in.

My role was to design the experience so the copy could evolve safely over time, without breaking flows or creating compliance issues. That meant treating copy as a core part of the design, not something added at the end.

This was one of the hardest parts of the project. Consent wasn’t optional, but being too heavy-handed risked damaging trust, especially for students and mentors already mid-program.

My challenge was finding the right balance between being clear and firm without feeling abrupt or hostile. Fully dismissible prompts didn’t meet legal needs. Jumping straight to locked states felt too aggressive.

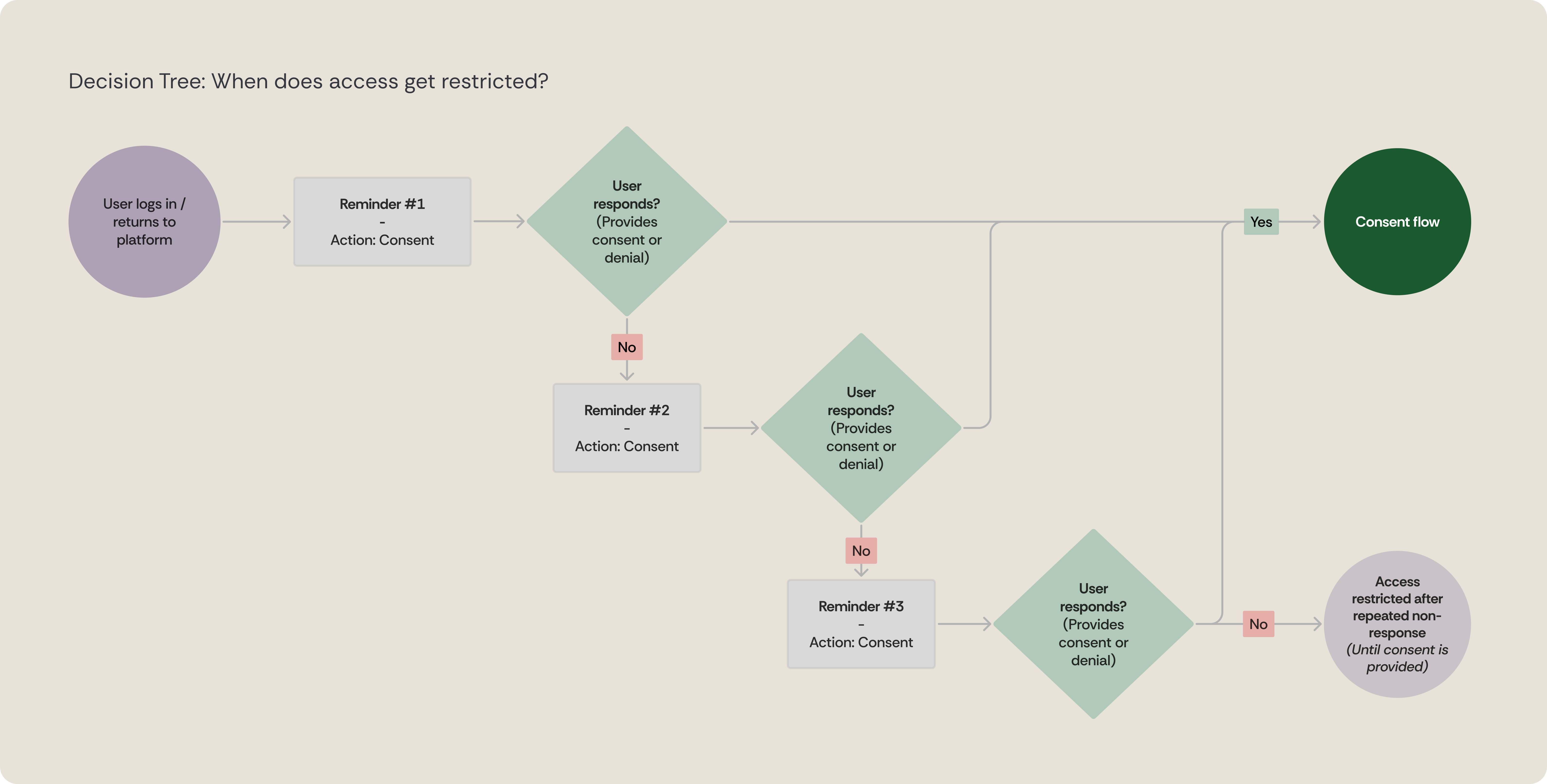

What we shipped instead was a gradual approach with clear explanations, limited ability to postpone, and reminders that escalated over time. The goal wasn’t just to enforce consent, but to guide people toward a decision in a way that felt predictable and respectful.

Progressive enforcement logic for consent non-response.

Access is restricted only after multiple reminders and repeated non-response, and is restored once consent is provided.
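The escalation can be sketched as a small state function. This is a simplified illustration under stated assumptions: the `MAX_REMINDERS` threshold, the `Stage` names, and the `ConsentState` fields are hypothetical, and the actual reminder counts and timing are not specified here.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    REMINDER = "reminder"        # prompt shown, user may still postpone
    RESTRICTED = "restricted"    # access limited until consent is provided
    FULL_ACCESS = "full_access"  # consent on file, nothing blocked


# Assumption for illustration: the real number of reminders is not stated.
MAX_REMINDERS = 3


@dataclass
class ConsentState:
    consent_provided: bool = False
    unanswered_reminders: int = 0


def current_stage(state: ConsentState) -> Stage:
    """Restrict only after repeated non-response; restore as soon as consent exists."""
    if state.consent_provided:
        return Stage.FULL_ACCESS
    if state.unanswered_reminders >= MAX_REMINDERS:
        return Stage.RESTRICTED
    return Stage.REMINDER
```

Because the consent check runs first, providing consent restores access no matter how many reminders went unanswered.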

Rather than designing separate consent experiences, I created one shared system that adapted to context, placement, and language. Keeping the UI consistent reduced cognitive load, while small adjustments made it feel relevant to each role.

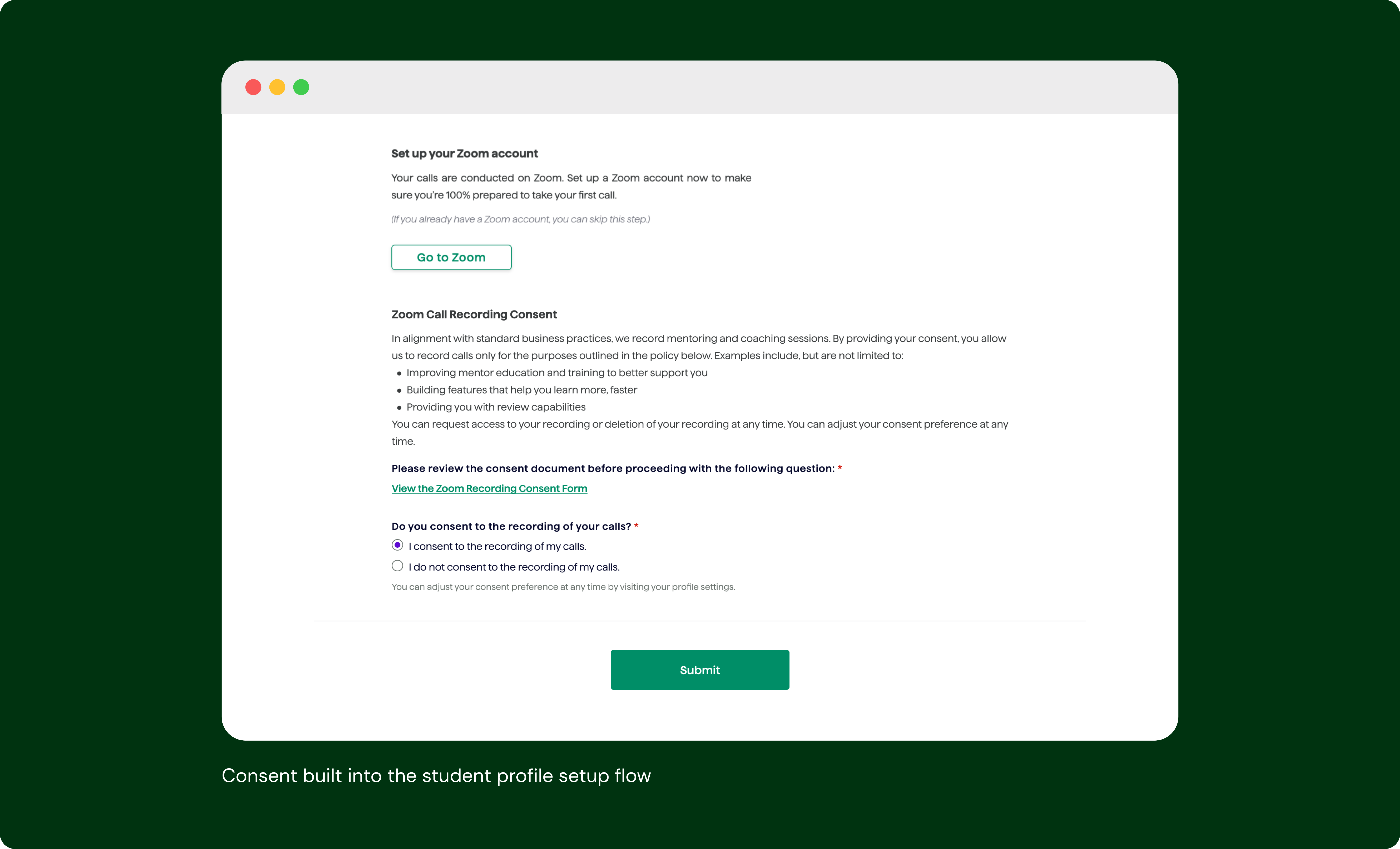

Consent was introduced later in the profile setup, after foundational information was completed.

For students, it was framed around learning and reflection. For mentors, the language addressed concerns around transparency and usage.

In both cases, the interaction stayed the same: clear consent, clear next steps, and the ability to opt in or out.

Progressive consent reminders before enforcement. Clear escalation helped avoid surprise restrictions while still requiring action.

Users could review the policy and make a decision as part of their normal workflow, rather than being redirected elsewhere.

Before launch, I partnered with Engineering during UAT to run design QA and make sure consent timing, reminder behavior, and edge cases worked as expected. We caught a few issues along the way, like reminder modals not triggering consistently in certain scenarios, but overall the logic and experience held up well enough to move forward.

After shipping, the focus shifted to real-world behavior. Student adoption exceeded expectations, with 82% of students consenting against a 70% target. Mentor consent landed at 56%, lower than the goal, but consistent with what we heard in research. Rather than treating launch as the finish line, we used these results as a signal: the student experience validated our approach, while mentor adoption highlighted where future phases needed to focus.

This project wasn’t meant to stand alone. Designing consent was the necessary first step to responsibly unlocking everything that followed, including access to call recordings, AI-generated summaries, and deeper insights into mentor-student interactions. With trust and compliance in place, the team could move forward without revisiting the same foundational questions.

Once consent was established, the conversation shifted from whether we could record calls to how recordings could actually support learning and mentorship. This was the prerequisite for the next phase of the roadmap and set the stage for designing the recording and summary experiences that came next.

This work established the foundation for introducing recordings into the product. In the next phase, we focused on helping students and mentors get more value from their calls with summaries and recording access.
